Fri, 25 December 2015

Today's episode is a reading of Isaac Asimov's The Machine That Won the War. I can't think of a story that's more appropriate for Data Skeptic. |

||

Fri, 18 December 2015

In this interview with Aaron Halfaker of the Wikimedia Foundation, we discuss his research and career related to the study of Wikipedia. In his paper The Rise and Decline of an Open Collaboration System, he highlights a decline in the number of active editors on Wikipedia which began in 2007. I asked Aaron about a variety of possible hypotheses for the phenomenon, in particular, how automated quality control tools that revert edits automatically could play a role. This led Aaron and his collaborators to develop Snuggle, an optimized interface to help Wikipedians better welcome newcomers to the community. We discuss the details of these topics as well as ORES, which provides revision scoring as a service to any software developer who wants to consume the output of its machine learning based scoring. You can find Aaron on Twitter as @halfak.

Direct download: wikipedia-revision-scoring-as-a-service.mp3

Category:general -- posted at: 6:30am PDT |

||

Fri, 11 December 2015

Today's topic is term frequency-inverse document frequency (TF-IDF), a statistic for estimating the importance of words and phrases in a set of documents. |
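To make the statistic concrete, here is a minimal sketch of one common TF-IDF variant (term frequency normalized by document length, unsmoothed logarithmic inverse document frequency). The function name `tf_idf` and the three toy documents are made up for illustration; real libraries often use smoothed or otherwise adjusted formulas.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Return one {term: score} dict per document (docs are lists of tokens)."""
    n_docs = len(docs)
    # Document frequency: in how many documents does each term appear?
    df = Counter(term for doc in docs for term in set(doc))
    scores = []
    for doc in docs:
        counts = Counter(doc)
        scores.append({
            # Term frequency times the log of the inverse document frequency.
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores

# Toy corpus, invented for this example.
docs = [
    "the parrot ate the seed".split(),
    "the dog ate the bone".split(),
    "the parrot sang".split(),
]
scores = tf_idf(docs)
```

A word like "the" that appears in every document scores zero, while "seed", which appears in only one document, scores higher than "parrot", which appears in two.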

||

Fri, 4 December 2015

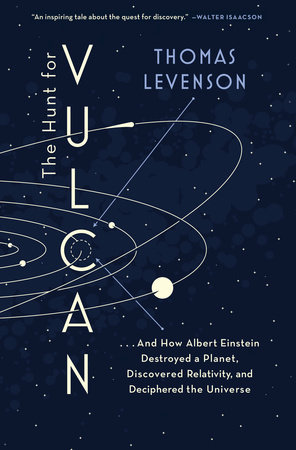

Early astronomers could see several of the planets with the naked eye. The invention of the telescope allowed for further understanding of our solar system. The work of Isaac Newton allowed later scientists to predict the existence and position of Neptune, which was then observationally confirmed very close to where it was predicted. It seemed only natural that a similar unknown body might explain anomalies in the orbit of Mercury, and thus began the search for the hypothesized planet Vulcan. Thomas Levenson's book "The Hunt for Vulcan" is a narrative of the key scientific minds involved in the search for, and eventual refutation of, an unobserved planet between Mercury and the sun. Thomas joins me in this episode to discuss his book and the fascinating story of the quest to find this planet. During the discussion, we mention one of the contributions made by Urbain-Jean-Joseph Le Verrier: a set of complex calculations that enabled him to predict where to find the planet that would eventually be called Neptune. The calculus behind this work is difficult, and some of that work is demonstrated in a Jupyter notebook I recently discovered from Paulo Marques titled The-Body Problem.

|

||

Fri, 27 November 2015

Today's episode discusses the accuracy paradox. There are cases when one might prefer a less accurate model because it yields more predictive power or better captures the underlying causal factors describing the outcome variable you are interested in. This is especially relevant in machine learning when trying to predict rare events. We discuss how the accuracy paradox might apply if you were trying to predict the likelihood a person was a bird owner. |
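As a rough illustration of the paradox, the sketch below compares two hypothetical bird-owner classifiers on an invented population of 1,000 people, 20 of whom own a bird. All counts are assumptions made up for the example, not data from the episode.

```python
def accuracy(y_true, y_pred):
    """Fraction of all predictions that are correct."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def recall(y_true, y_pred):
    """Fraction of actual positives that the model finds."""
    predictions_for_positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(predictions_for_positives) / len(predictions_for_positives)

# Hypothetical population: 20 bird owners (1) followed by 980 non-owners (0).
y_true = [1] * 20 + [0] * 980

# Model A always answers "not a bird owner".
always_no = [0] * 1000

# Model B finds 15 of the 20 owners but also wrongly flags 60 non-owners.
model_b = [1] * 15 + [0] * 5 + [1] * 60 + [0] * 920

acc_a, rec_a = accuracy(y_true, always_no), recall(y_true, always_no)
acc_b, rec_b = accuracy(y_true, model_b), recall(y_true, model_b)
```

Model A is 98% accurate yet never identifies a single bird owner, while Model B is only 93.5% accurate but finds 75% of them, which is why accuracy alone can be misleading for rare events.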

||

Fri, 20 November 2015

... or should this have been called data science from a neuroscientist's perspective? Either way, I'm sure you'll enjoy this discussion with Laurie Skelly. Laurie earned a PhD in Integrative Neuroscience from the Department of Psychology at the University of Chicago. In her life as a social neuroscientist, using fMRI to study the neural processes behind empathy and psychopathy, she learned the ropes of zooming in and out between the macroscopic and the microscopic -- how millions of data points come together to tell us something meaningful about human nature. She's currently at Metis Data Science, an organization that helps people learn the skills of data science to transition into industry. In this episode, we discuss fMRI technology, Laurie's research studying empathy and psychopathy, as well as the skills and tools that neuroscientists and data scientists have in common. For listeners interested in more on this subject, Laurie recommended the blogs Neuroskeptic, Neurocritic, and Neuroecology. We conclude the episode with a mention of the upcoming Metis Data Science San Francisco cohort which Laurie will be teaching. If anyone is interested in applying to participate, they can do so here. |

||

Fri, 13 November 2015

A discussion of the expected number of cars at a stoplight frames today's discussion of the bias-variance tradeoff. The central idea of this concept relates to model complexity. A very simple model will likely behave consistently from training to testing data (low variance), but will have high bias since its simplicity can prevent it from capturing the relationship between the covariates and the output. As a model grows more and more complex, it may capture more of the underlying data, but the risk that it overfits the training data and therefore does not generalize (high variance) increases. The tradeoff between minimizing variance and minimizing bias is an ongoing challenge for data scientists, and an important discussion for skeptics around how much we should trust models. |
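The tradeoff can be seen in a small simulation. The sketch below is an invented setup, not the stoplight example from the episode: it repeatedly draws noisy training data from an assumed true function and compares a very simple model (always predict the mean of the training targets) with a very flexible one (1-nearest neighbor) at a single test point.

```python
import random
import statistics

random.seed(0)

def true_f(x):
    # Assumed "true" relationship, chosen only for illustration.
    return x ** 2

xs = [i / 10 for i in range(11)]   # fixed training inputs 0.0 .. 1.0
x0 = 1.0                           # point at which both models are evaluated
noise_sd = 0.3
simple_preds, complex_preds = [], []

for _ in range(2000):
    ys = [true_f(x) + random.gauss(0, noise_sd) for x in xs]
    # Simple model: ignore x and predict the mean of the training targets.
    simple_preds.append(statistics.fmean(ys))
    # Complex model: 1-nearest neighbor, i.e. copy the closest training target.
    nearest = min(range(len(xs)), key=lambda i: abs(xs[i] - x0))
    complex_preds.append(ys[nearest])

def bias_sq(preds):
    """Squared gap between the average prediction and the truth at x0."""
    return (statistics.fmean(preds) - true_f(x0)) ** 2

def variance(preds):
    """How much the prediction at x0 moves from one training set to the next."""
    return statistics.pvariance(preds)
```

Under these assumptions the mean-only model shows a large squared bias and a small variance, while the nearest-neighbor model shows almost no bias but a variance close to the noise level.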

||

Fri, 6 November 2015

The recent opinion piece Big Data Doesn't Exist on TechCrunch by Slater Victoroff is an interesting discussion about the usefulness of data both big and small. Slater joins me this episode to expand on that piece. Slater Victoroff is CEO of indico Data Solutions, a company whose services turn raw text and image data into human insight. He and his co-founders studied at Olin College of Engineering, where indico was born. indico was then accepted into the "Techstars Accelerator Program" in the Fall of 2014 and went on to raise $3M in seed funding. His recent essay "Big Data Doesn't Exist" received a lot of traction on TechCrunch, and I have invited Slater to join me today to discuss his perspective and touch on a few topics in the machine learning space as well. |

||

Fri, 30 October 2015

The degree to which two variables change together can be calculated in the form of their covariance. This value can be normalized to the correlation coefficient, which has the advantage of being a unitless measure bounded between -1 and 1 (inclusive). This episode discusses how we arrive at these values and why they are important. |
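For readers who want to see the arithmetic, here is a small sketch of the sample covariance and the Pearson correlation coefficient. The height and weight figures are invented for illustration.

```python
import math

def covariance(xs, ys):
    """Sample covariance (divides by n - 1)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (len(xs) - 1)

def correlation(xs, ys):
    """Covariance normalized by both standard deviations."""
    return covariance(xs, ys) / math.sqrt(covariance(xs, xs) * covariance(ys, ys))

# Invented measurements for five people.
heights_cm = [150, 160, 170, 180, 190]
weights_kg = [52, 58, 69, 77, 90]
heights_m = [h / 100 for h in heights_cm]

cov_cm = covariance(heights_cm, weights_kg)
cov_m = covariance(heights_m, weights_kg)
corr_cm = correlation(heights_cm, weights_kg)
corr_m = correlation(heights_m, weights_kg)
```

Switching from centimeters to meters shrinks the covariance by a factor of 100 but leaves the correlation unchanged, which is the practical benefit of the normalization.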

||

Fri, 23 October 2015

Today's guest is Cameron Davidson-Pilon. Cameron has a master's degree in quantitative finance from the University of Waterloo. Think of it as statistics on stock markets. For the last two years he's been the team lead of data science at Shopify. He's the founder of dataorigami.net, which produces screencasts teaching methods and techniques of applied data science. He's also the author of the just-released print book Bayesian Methods for Hackers: Probabilistic Programming and Bayesian Inference, which you can also get in a digital form. This episode focuses on the topic of Bayesian A/B Testing, which spans just one chapter of the book. Related to today's discussion is the Data Origami post The class imbalance problem in A/B testing. Lastly, Data Skeptic will be giving away a copy of the print version of the book to one lucky listener who has a US-based delivery address. To participate, you'll need to write a review of any site, book, course, or podcast of your choice on datasciguide.com. After it goes live, tweet a link to it with the hashtag #WinDSBook to be given an entry in the contest. This contest will end November 20th, 2015, at which time I'll draw a single winner at random and contact them for delivery details via direct message on Twitter. |

||

Fri, 16 October 2015

The central limit theorem is an important statistical result which states that, typically, the mean of a large enough set of independent trials is approximately normally distributed. This episode explores how this might be used to determine if an Amazon parrot like Yoshi produces more or less waste than an African Grey, under the assumption that the individual distributions are not normal. |
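A quick simulation shows the theorem at work. The sketch below assumes the individual observations follow an exponential distribution (chosen only because it is clearly skewed and not normal) and looks at the means of many samples of size 50.

```python
import random
import statistics

random.seed(1)

def sample_mean(n):
    # Exponential draws with rate 1 have mean 1 and standard deviation 1.
    return statistics.fmean(random.expovariate(1.0) for _ in range(n))

n = 50
means = [sample_mean(n) for _ in range(5000)]

center = statistics.fmean(means)   # should be close to 1
spread = statistics.stdev(means)   # should be close to 1 / sqrt(50)

# For a normal distribution roughly 68% of values fall within one
# standard deviation of the mean.
within_one_sd = sum(abs(m - center) <= spread for m in means) / len(means)
```

Even though each individual draw is far from normal, the sample means cluster around the true mean with a spread near 1/sqrt(n), and about two thirds of them land within one standard deviation of the center.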

||

Fri, 9 October 2015

Today's guest is Chris Hofstader (@gonz_blinko), an accessibility researcher and advocate, as well as an activist for causes such as improving access to information for blind and vision-impaired people. His background in computer programming enabled him to be the leader of JAWS, a Windows program that allowed people with a visual impairment to read their screen either through text-to-speech or a refreshable braille display. He's the Managing Member of 3 Mouse Technology. He's also a frequent blogger, primarily at chrishofstader.com. For web developers and site owners, Chris recommends two tools to help test for accessibility issues: tenon.io and dqtech.co. A guest post from Chris titled Skepticism and Disability appeared on the Skepchick blog, which led to the formation of the sister site Skeptibility. In a discussion of skepticism and favorite podcasts, Chris mentioned a number of great shows, most notably The Pod Delusion, to which he was a contributor. Additionally, Chris has appeared on The Atheist Nomads. Lastly, a shout out from Chris to musician Shelley Segal, whom he hosted just before the date of recording of this episode. Her music can be found on her site or via bandcamp. |

||

Fri, 2 October 2015

The multi-armed bandit problem is named with reference to slot machines (one-armed bandits). Given the chance to play from a pool of slot machines, all with unknown payout frequencies, how can you maximize your reward? If you knew in advance which machine was best, you would play exclusively that machine. Any other strategy will, on average, earn less payout, and the difference can be called the "regret". You can try each slot machine to learn about it, which we refer to as exploration. When you've spent enough time to be convinced you've identified the best machine, you can then double down and exploit that knowledge. But how do you best balance exploration and exploitation to minimize the regret of your play? This mini-episode explores a few examples including restaurant selection and A/B testing to discuss the nature of this problem. In the end we touch briefly on Thompson sampling as a solution. |
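As a hedged sketch of how Thompson sampling balances the two, the simulation below plays three hypothetical slot machines whose payout rates are invented for the example. Each arm keeps a Beta posterior over its payout rate (starting from a uniform prior, which is an assumption), and on every round we play the arm whose sampled rate is highest.

```python
import random

random.seed(2)

true_rates = [0.2, 0.5, 0.7]      # hidden payout probabilities (invented)
n_arms = len(true_rates)
wins = [0] * n_arms
losses = [0] * n_arms
pulls = [0] * n_arms

for _ in range(3000):
    # Draw a plausible payout rate for each arm from its Beta posterior...
    draws = [random.betavariate(wins[a] + 1, losses[a] + 1) for a in range(n_arms)]
    # ...and play the arm whose draw is highest.
    arm = draws.index(max(draws))
    payout = random.random() < true_rates[arm]
    wins[arm] += payout
    losses[arm] += not payout
    pulls[arm] += 1

# Expected regret: payout given up by not always playing the best arm.
best = max(true_rates)
regret = sum(pulls[a] * (best - true_rates[a]) for a in range(n_arms))
```

Arms that look poor are sampled less and less often, so exploration fades naturally as evidence accumulates and most of the 3,000 plays go to the best machine.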

||

Fri, 25 September 2015

Our episode this week begins with a correction. Back in episode 28 (Monkeys on Typewriters), Kyle made some bold claims about the probability that monkeys banging on typewriters might produce the entire works of Shakespeare by chance. The proof shown in the show notes turned out to be a bit dubious and Dave Spiegel joins us in this episode to set the record straight. In addition to that, our discussion explores a number of interesting topics in astronomy and astrophysics. This includes a paper Dave wrote with Ed Turner titled "Bayesian analysis of the astrobiological implications of life's early emergence on Earth" as well as exoplanet discovery. |

||

Thu, 17 September 2015

There are several factors that are important to selecting an appropriate sample size and dealing with small samples. The most important questions are around representativeness - how well does your sample represent the total population and capture all its variance? Linhda and Kyle talk through a few examples including elections, picking an Airbnb, produce selection, and home shopping, all cases in which the number of observations one has is more or less important depending on how complex the underlying system is. |

||

Fri, 11 September 2015

There's an old adage which says you cannot fit a model which has more parameters than you have data points. While this is often the case, it's not a universal truth. Today's guest Jake VanderPlas explains this topic in detail and provides some excellent examples of when it holds and when it doesn't. Some excellent visuals articulating the points can be found on Jake's blog Pythonic Perambulations, specifically in his post The Model Complexity Myth. We also touch on Jake's work as an astronomer, his noteworthy open source contributions, and his forthcoming book (currently available in an Early Edition) Python Data Science Handbook. |

||

Fri, 4 September 2015

There are many occasions in which one might want to know the distance or similarity between two things, for which the means of calculating that distance is not necessarily clear. The distance between two points in Euclidean space is generally straightforward, but what about the distance between the top of Mount Everest and the bottom of the ocean? What about the distance between two sentences? This mini-episode summarizes some of the considerations and a few of the means of calculating distance. We touch on Jaccard Similarity, Manhattan Distance, and a few others. |
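Three of the measures mentioned can be sketched in a few lines. The example sentences are invented, and treating a sentence as a set of lowercase words is just one simple assumption about how to compare text.

```python
import math

def euclidean(p, q):
    """Straight-line distance between two points."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def manhattan(p, q):
    """Distance when you may only travel along the axes, like city blocks."""
    return sum(abs(a - b) for a, b in zip(p, q))

def jaccard_similarity(a, b):
    """Size of the overlap divided by the size of the union of two sets."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)

s1 = "the parrot ate the seed".split()
s2 = "the parrot ate the cracker".split()

d_euclid = euclidean((0, 0), (3, 4))       # 5.0
d_manhattan = manhattan((0, 0), (3, 4))    # 7
similarity = jaccard_similarity(s1, s2)    # 3 shared words out of 5 distinct
```

The two sentences share three of their five distinct words, giving a Jaccard similarity of 0.6; one minus that value can be used as a distance.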

||

Fri, 28 August 2015

ContentMine is a project which provides the tools and workflow to convert scientific literature into machine readable and machine interpretable data in order to facilitate better and more effective access to the accumulated knowledge of humankind. The program's founder Peter Murray-Rust joins us this week to discuss ContentMine. Our discussion covers the project, the scientific publication process, copyright, and several other interesting topics. |

||

Thu, 20 August 2015

Today's mini-episode explains the distinction between structured and unstructured data, and debates which of these categories best describes recipes. |

||

Fri, 14 August 2015

Yusan Lin shares her research on using data science to explore the fashion industry in this episode. She has applied techniques from data mining, natural language processing, and social network analysis to explore who the innovators in the fashion world are and how their influence affects other designers. If you found this episode interesting and would like to read more, Yusan's papers Text-Generated Fashion Influence Model: An Empirical Study on Style.com and The Hidden Influence Network in the Fashion Industry are worth reading. |

||

Fri, 7 August 2015

![[MINI] PageRank [MINI] PageRank](http://assets.libsyn.com/content/9545086)

PageRank is the algorithm most famous for being one of the original innovations that made Google stand out as a search engine. It was defined in the classic paper The Anatomy of a Large-Scale Hypertextual Web Search Engine by Sergey Brin and Larry Page. While this algorithm clearly impacted web searching, it has also been useful in a variety of other applications. This episode presents a high level description of this algorithm and how it might apply when trying to establish who writes the most influential academic papers. |
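The core idea can be sketched with a simple power iteration. This is a simplified illustration rather than the production algorithm: the damping factor of 0.85 is the commonly quoted value, the citation graph is invented, and nodes without outgoing links are handled by spreading their rank evenly over all nodes.

```python
def pagerank(links, damping=0.85, iterations=100):
    """links maps each node to the list of nodes it links to (or cites)."""
    nodes = list(links)
    rank = {n: 1 / len(nodes) for n in nodes}
    for _ in range(iterations):
        # Every node receives a small baseline share of rank.
        new_rank = {n: (1 - damping) / len(nodes) for n in nodes}
        for node, targets in links.items():
            # A node with no outgoing links spreads its rank over everyone.
            targets = targets or nodes
            share = damping * rank[node] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical citation graph: paper -> papers it cites.
citations = {
    "A": [],
    "B": ["A"],
    "C": ["A", "B"],
    "D": ["A", "C"],
}
ranks = pagerank(citations)
most_influential = max(ranks, key=ranks.get)
```

Paper A, which every other paper cites, ends up with the highest score, and the scores always sum to one.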

||

Wed, 29 July 2015

In this episode, Benjamin Uminsky enlightens us about some of the ways the Los Angeles County Registrar-Recorder/County Clerk leverages data science and analysis to be more effective and efficient in the services it provides to citizens. Our topics range from forecasting to predicting the likelihood that people will volunteer to be poll workers. Benjamin recently spoke at Big Data Day LA. Videos have not yet been posted, but you can see the slides from his talk Data Mining Forecasting and BI at the RRCC if this episode has left you hungry to learn more. During the show, Benjamin encouraged any Los Angeles residents who have some time to serve their community to consider becoming a poll worker. |

||

Thu, 23 July 2015

![[MINI] k-Nearest Neighbors [MINI] k-Nearest Neighbors](http://assets.libsyn.com/content/9470577)

This episode explores the k-nearest neighbors algorithm, a supervised, non-parametric method that can be used for both classification and regression. The basic concept is that it uses some distance function on your dataset to find the $k$ closest other observations and then averages them (or, for classification, takes a vote among their labels) to impute an unknown value or label an unlabelled datapoint. |
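A minimal sketch of both uses follows, assuming Euclidean distance and tiny invented datasets. The helper names are made up for the example.

```python
import math
from collections import Counter

def k_nearest(train, query, k):
    """train is a list of (features, target) pairs; return the k closest."""
    return sorted(train, key=lambda row: math.dist(row[0], query))[:k]

def knn_classify(train, query, k=3):
    """Majority vote among the labels of the k nearest neighbors."""
    labels = [label for _, label in k_nearest(train, query, k)]
    return Counter(labels).most_common(1)[0][0]

def knn_regress(train, query, k=3):
    """Average of the target values of the k nearest neighbors."""
    values = [value for _, value in k_nearest(train, query, k)]
    return sum(values) / len(values)

# Invented labelled points for classification.
labelled = [
    ((1, 1), "small"), ((1, 2), "small"), ((2, 1), "small"),
    ((8, 8), "large"), ((8, 9), "large"), ((9, 8), "large"),
]
# Invented one-dimensional data for regression.
priced = [((1,), 10.0), ((2,), 20.0), ((3,), 30.0), ((10,), 100.0)]

label = knn_classify(labelled, (2, 2))   # "small"
estimate = knn_regress(priced, (2,))     # mean of 10, 20 and 30
```

Because the method only stores the labelled examples and consults them at prediction time, there is no separate training step and no fixed set of parameters to fit.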

||

Fri, 17 July 2015

How do people think rationally about small probability events? What is the optimal statistical process by which one can update their beliefs in light of new evidence? This episode of Data Skeptic explores questions like this as Kyle consults a cast of previous guests and experts to try and answer the question "What is the probability, however small, that Bigfoot is real?" |

||

Thu, 9 July 2015

This mini-episode is a high level explanation of the basic idea behind MapReduce, which is a fundamental concept in big data. The idea originates from a Google paper titled MapReduce: Simplified Data Processing on Large Clusters. This episode makes an analogy to tabulating paper voting ballots as a means of helping to explain how and why MapReduce is an important concept. |
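The ballot-counting analogy can be sketched on a single machine. In a real cluster each phase would run in parallel on different workers; the candidate names and precinct data here are invented.

```python
from collections import defaultdict

def map_phase(ballot_box):
    # Each worker turns its own box of ballots into (candidate, 1) pairs.
    return [(candidate, 1) for candidate in ballot_box]

def shuffle(pairs):
    # Group all emitted values by key so each key can be reduced independently.
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_phase(grouped):
    # Collapse each candidate's list of ones into a single total.
    return {key: sum(values) for key, values in grouped.items()}

# Three hypothetical precincts, each with its own box of ballots.
precincts = [
    ["alice", "bob", "alice"],
    ["bob", "bob"],
    ["alice", "carol"],
]
mapped = [pair for box in precincts for pair in map_phase(box)]
tally = reduce_phase(shuffle(mapped))
```

Since no precinct needs to know about any other during the map phase, and no candidate's total depends on another's during the reduce phase, both phases can be spread across as many workers as are available.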

||

Fri, 3 July 2015

The Credible Hulk joins me in this episode to discuss a recent blog post he wrote about glyphosate and the data about how its introduction changed the historical usage trends of other herbicides. Links to all the sources and references can be found in the blog post. In this discussion, we also mention the Food Babe and Last Thursdayism, which may be worth some further reading. Kyle also mentioned the list of ingredients or chemical composition of a banana. Credible Hulk mentioned the Mommy PhD Facebook page. An interesting article about Mommy PhD can be found here. Lastly, if you enjoyed the show, please "Like" the Credible Hulk Facebook group. |

||

Fri, 26 June 2015

More features are not always better! With an increasing number of features to consider, machine learning algorithms suffer from the curse of dimensionality, as they have a wider space to cover and often sparser coverage of examples within it. This episode explores a real life example of this as Kyle and Linhda discuss their thoughts on purchasing a home. The term curse of dimensionality was coined by Richard Bellman, and it applies in several subtly different settings. This mini-episode discusses how it applies to machine learning. This episode does not, however, discuss a slightly different version of the curse of dimensionality which appears in decision theoretic situations. Consider the game of chess. One must think ahead several moves in order to execute a successful strategy. However, thinking ahead another move requires a consideration of every possible move of every piece controlled, and every possible response one's opponent may make. The space of possible future states of the board grows exponentially with the horizon one wants to look ahead to. This is present in the notably useful Bellman equation.
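One symptom of the machine learning version of the curse is that distances lose their contrast as features are added. The sketch below assumes points drawn uniformly at random from a unit hypercube (an artificial setup chosen for illustration) and compares the nearest and farthest neighbor of a random query point.

```python
import math
import random

random.seed(4)

def contrast(dim, n_points=200):
    """Ratio of nearest to farthest distance from a random query point."""
    query = [random.random() for _ in range(dim)]
    dists = [
        math.dist(query, [random.random() for _ in range(dim)])
        for _ in range(n_points)
    ]
    return min(dists) / max(dists)

low = contrast(2)      # two features: the nearest point is far closer than the farthest
high = contrast(500)   # 500 features: nearest and farthest are almost equally far away
```

When the ratio approaches one, every example looks about equally similar to the query, which makes methods that rely on "nearby" examples much less informative unless far more data is collected.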

Direct download: MINI_The_Curse_of_Dimensionality.mp3

Category:miniepisode -- posted at: 12:01am PDT |

||

Fri, 19 June 2015

This episode discusses video game analytics with guest Anders Drachen. The way in which people get access to games and the opportunity for game designers to ask interesting questions with data has changed quite a bit in the last two decades. Anders shares his insights about the past, present, and future of game analytics. We explore not only some of the innovations and interesting ways of examining user experience in the gaming industry, but also touch on some of the exciting opportunities for innovation that are right on the horizon. You can find more from Anders online at andersdrachen.com, and follow him on Twitter @andersdrachen. |

||

Fri, 12 June 2015

![[MINI] Anscombe's Quartet [MINI] Anscombe's Quartet](http://assets.libsyn.com/content/9177781)

This mini-episode discusses Anscombe's Quartet, a series of four datasets which look very different when plotted but share nearly identical summary statistics. For example, each of the four datasets has essentially the same mean and variance on both axes, as well as the same correlation coefficient and the same linear regression line.

The episode tries to add some context by imagining each of these datasets as data about a sports team, and why it can be important to look beyond basic summary statistics when exploring your dataset. |

||

Mon, 8 June 2015

A recent episode of the Skeptics' Guide to the Universe included a slight rant by Dr. Novella and the rogues about a shortcoming in operating systems. This episode explores why such a (seemingly obvious) flaw might make sense from an engineering perspective, and how data science might be the solution. In this solo episode, Kyle proposes the concept of "annoyance mining" - the idea that with proper logging and enough feedback, data scientists could be provided the right dataset from which they can automatically detect bugs, flaws, and annoyances in software and other systems, along with potential improvements which could make those systems better. As system complexity grows, it seems that an abstraction like this might be required in order to maintain an effective development cycle. This episode is a bit of a soap box for Kyle as he explores why and how we might track an appropriate amount of data to be able to make software and systems better suited to their users. |

||

Fri, 5 June 2015

Elizabeth Lee from CyArk joins us in this episode to share stories of the work done capturing important historical sites digitally. CyArk is a non-profit focused on using technology to preserve the world's important historic and cultural locations digitally. Ben Kacyra, a pioneer in 3D capture technology, founded CyArk with his wife after seeing the need to preserve important artifacts and locations digitally before they are lost to natural disasters, human destruction, or the passage of time. We discuss their technology, data, and site selection, including the upcoming themes of locations and the CyArk 500. Elizabeth puts out the call to all listeners to share their opinions on what important sites should be included in The CyArk 500 Challenge - an effort to digitally preserve 500 of the most culturally important heritage sites within the next five years. You can nominate a site by submitting a short form at CyArk.org. Visit http://www.cyark.org/projects/ to view an immersive, interactive experience of many of the sites preserved. |

||

Fri, 29 May 2015

Linhda and Kyle review a New York Times article titled How Your Hometown Affects Your Chances of Marriage. This article explores research, broken down by county, about what correlates with the likelihood of being married by age 26. Kyle and Linhda discuss some of the fine points of this research and the process of identifying factors for consideration. |

||

Thu, 21 May 2015

With the advent of algorithms capable of beating highly ranked chess players, the temptation to cheat has emerged as a potential threat to the integrity of this ancient and complex game. Yet, there are aspects of computer play that are measurably different from human play. Dr. Kenneth Regan has developed a methodology for looking at a long series of moves and measuring the likelihood that the moves may have been selected by an algorithm. The full transcript of this episode is well annotated and has a wealth of excellent links to the things discussed. If you're interested in learning more about Dr. Regan, his homepage (Kenneth Regan), his page on wikispaces, and the Amazon page of books by Kenneth W. Regan are all great resources. |

||

Thu, 14 May 2015

This week's episode discusses z-scores, also known as standard scores. A z-score describes the distance (in standard deviations) that an observation is away from the mean of the population. A closely related topic is the 68-95-99.7 rule, which tells us that (approximately) 68% of a normally distributed population lies within one standard deviation of the mean, 95% within two, and 99.7% within three. Kyle and Linh Da discuss z-scores in the context of human height. If you'd like to calculate your own z-score for height, you can do so below. They further discuss how a z-score can also describe how surprising a statistical result would be if only chance were at work. Thus, if the significance of a finding is said to be 3σ, a deviation at least that large in either direction would be expected only about 0.3% of the time from chance alone. |
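Here is a short sketch of both ideas using Python's standard library. The population mean and standard deviation for height are assumed round numbers chosen purely for illustration, not real survey figures.

```python
from statistics import NormalDist

def z_score(x, mean, sd):
    """Number of standard deviations that x lies above (or below) the mean."""
    return (x - mean) / sd

# Assumed, purely illustrative population parameters for adult height in cm.
mean_cm, sd_cm = 170.0, 10.0
z = z_score(185.0, mean_cm, sd_cm)   # 1.5 standard deviations above the mean

standard_normal = NormalDist()       # mean 0, standard deviation 1

def share_within(k):
    """Fraction of a normal population within k standard deviations of the mean."""
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

rule = [share_within(k) for k in (1, 2, 3)]
```

The three values in `rule` come out at roughly 0.683, 0.954, and 0.997, which is where the 68-95-99.7 rule gets its name.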

||

Fri, 8 May 2015

This week Noelle Sio Saldana discusses her volunteer work at Crisis Text Line - a 24/7 service that connects anyone with crisis counselors. In the episode we discuss Noelle's career and how, as a participant in the Pivotal for Good program (a partnership with DataKind), she spent three months helping find insights in the messaging data collected by Crisis Text Line. These insights helped give visibility into a number of different aspects of Crisis Text Line's services. Listen to this episode to find out how! If you or someone you know is in a moment of crisis, there's someone ready to talk to you by texting the shortcode 741741. |

||

Thu, 30 April 2015

Have you ever wondered what is lost when you compress a song into an MP3? This week's guest Ryan Maguire did more than that. He worked on software to isolate the sounds that are lost when you convert a lossless digital audio recording into a compressed MP3 file. To complete his project, Ryan worked primarily in Python using the pyo library as well as the Bregman Toolkit. Ryan mentioned humans having a dynamic range of hearing from 20 Hz to 20,000 Hz; if you'd like to hear those tones, check the previous link. If you'd like to know more about our guest Ryan Maguire you can find his website at the previous link. To follow The Ghost in the MP3 project, please check out their Facebook page, or the site theghostinthemp3.com. A PDF of Ryan's publication quality write up can be found at this link: The Ghost in the MP3, and it is definitely worth the read if you'd like to know more of the technical details. |

||

Mon, 27 April 2015

This episode contains coverage of the 2015 Data Fest hosted at UCLA. Data Fest is an analysis competition that gives teams of students 48 hours to explore a new dataset and present novel findings. This year, data from Edmunds.com was provided, and students competed in three categories: best recommendation, best use of external data, and best visualization. |

||

Fri, 24 April 2015

For our 50th episode we indulge a bit by cooking Linhda's previously mentioned "healthy" cornbread. This leads to a discussion of the statistical topic of overdispersion, in which the variance of some distribution is larger than what one's underlying model will account for.

Direct download: MINI_Cornbread_and_Overdispersion.mp3

Category:miniepisode -- posted at: 12:19am PDT |

||

Thu, 16 April 2015

This episode overviews some of the fundamental concepts of natural language processing including stemming, n-grams, part-of-speech tagging, and the bag-of-words approach. |
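Two of these concepts are simple enough to sketch directly. The example below assumes whitespace tokenization and lowercasing, which is a deliberate simplification; stemming and part-of-speech tagging are left out because they need language-specific rules or trained models.

```python
from collections import Counter

def tokenize(text):
    """Very rough tokenizer: lowercase, then split on whitespace."""
    return text.lower().split()

def ngrams(tokens, n):
    """All runs of n consecutive tokens, in order."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bag_of_words(tokens):
    # Word order is discarded; only the counts survive.
    return Counter(tokens)

tokens = tokenize("The parrot ate the seed")
bigrams = ngrams(tokens, 2)
bag = bag_of_words(tokens)
```

The bigrams keep some local word order ("the parrot", "parrot ate", and so on), while the bag of words only records that "the" occurred twice and every other word once.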

||

Thu, 9 April 2015

Guest Youyou Wu discusses the work she and her collaborators did to measure the accuracy of computer based personality judgments. Using Facebook "like" data, they found that machine learning approaches could be used to estimate users' self-assessments of the "big five" personality traits: openness, agreeableness, extraversion, conscientiousness, and neuroticism. Interestingly, the computer-based assessments outperformed the assessments made by certain groups of human beings. Listen to the episode to learn more. The original paper Computer-based personality judgements are more accurate than those made by humans appeared in the January 2015 volume of the Proceedings of the National Academy of Sciences (PNAS). For her benevolent recommendation, Youyou suggests Private traits and attributes are predictable from digital records of human behavior by Michal Kosinski, David Stillwell, and Thore Graepel. It's a similar paper by her co-authors which looks at demographic traits rather than personality traits. And for her self-serving recommendation, Youyou has a link that I'm very excited about. You can visit ApplyMagicSauce.com to see how this model evaluates your personality based on your Facebook like information. I'd love it if listeners participated in this research and shared their perspective on the results via The Data Skeptic Podcast Facebook page. I'm going to be posting mine there for everyone to see.

Direct download: Computer_Based_Personality_Judgments_with_Youyou_Wu.mp3

Category:psychology -- posted at: 8:08pm PDT |

||

Thu, 2 April 2015

This episode explores how going wine tasting could teach us about using Markov chain Monte Carlo (MCMC). |

||

Fri, 20 March 2015

This episode introduces the idea of a Markov Chain. A Markov Chain has a set of states describing a particular system, and a probability of moving from each state to every state it is connected to. Markov Chains are memoryless, meaning they don't rely on a long history of previous observations. The current state of a system depends only on the previous state and the results of a random outcome. Markov Chains are a useful method for describing the state and transition model of non-deterministic (stochastic) systems. As examples, we discuss whether stop light signals, bowling, and text prediction systems can be described with Markov Chains. |
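As a toy illustration, the sketch below models a stop light whose time in each color is random. The transition probabilities are invented for the example and are not taken from the episode.

```python
import random

random.seed(3)

# Hypothetical transition probabilities; each row sums to 1.
transitions = {
    "green":  {"green": 0.8, "yellow": 0.2},
    "yellow": {"yellow": 0.5, "red": 0.5},
    "red":    {"red": 0.7, "green": 0.3},
}

def step(state):
    # The next state depends only on the current state (memorylessness).
    options = transitions[state]
    return random.choices(list(options), weights=list(options.values()))[0]

def simulate(start, n_steps):
    """Return the list of visited states, beginning with the start state."""
    path = [start]
    for _ in range(n_steps):
        path.append(step(path[-1]))
    return path

path = simulate("green", 10000)
green_share = path.count("green") / len(path)
```

Over a long run the light spends a stable fraction of its time in each color (a little under half on green with these numbers), even though any individual change is random.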

||

Fri, 13 March 2015

Nicole Goebel joins us this week to share her experiences in oceanography studying phytoplankton and other aspects of the ocean and how data plays a role in that science.

We also discuss Thinkful, where Nicole and I are both mentors for the Introduction to Data Science course. Last but not least, check out Nicole's blog Data Science Girl and the videos Kyle mentioned on her YouTube channel, including one on the diversity of phytoplankton and how that changes in time and space. |

||

Fri, 6 March 2015

This episode explores Ordinary Least Squares or OLS - a method for finding a good fit which describes a given dataset.
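For a single predictor, the OLS line has a simple closed form. The sketch below fits a slope and intercept to five invented data points; the function name `ols_fit` is made up for the example.

```python
def ols_fit(xs, ys):
    """Return (slope, intercept) minimizing the sum of squared residuals."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-variation of x and y, and variation of x, around their means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented observations that lie close to the line y = 2x.
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]
slope, intercept = ols_fit(xs, ys)
```

For this data the fitted line is approximately y = 1.99x + 0.05, and it always passes through the point of means.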

Direct download: MINI_Ordinary_Least_Squares_Regression.mp3

Category:miniepisode -- posted at: 12:43am PDT |

||

Thu, 26 February 2015

New York State approved the use of automated speed cameras within a specific range of schools. Tim Schmeier did an analysis of publicly available data related to these cameras as part of a project at the NYC Data Science Academy. Tim's work leverages several open data sets to ask the question: are the speed cameras succeeding in their intended purpose of increasing public safety near schools? What he found using open data may surprise you. You can read Tim's write up titled Speed Cameras: Revenue or Public Safety? on the NYC Data Science Academy blog. His original write up, reproducible analysis, and figures are a great complement to this episode. For his benevolent recommendation, Tim suggests listeners visit Maddie's Fund - a data driven charity devoted to helping achieve and sustain a no-kill pet nation. And for his self-serving recommendation, Tim Schmeier will very shortly be on the job market. If you, your employer, or someone you know is looking for data science talent, you can reach Tim at his gmail account which is timothy.schmeier at gmail dot com. |

||

Thu, 19 February 2015

The k-means clustering algorithm assigns every point in an n-dimensional dataset a single, definite label from a chosen number "k" of clusters. This mini-episode explores how the biological processes of Yoshi, our lilac-crowned amazon, might be a useful way of measuring where she sits when there are no humans around. Listen to find out how! |
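A bare-bones version of the usual iterative procedure (often called Lloyd's algorithm) is sketched below. The points are invented, and the starting centroids are supplied by hand so the example is repeatable; in practice they are usually chosen at random, so different runs can produce different clusters.

```python
import math

def k_means(points, centroids, iterations=20):
    """Alternate between assigning points and moving centroids."""
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(c) / len(cluster) for c in zip(*cluster)) if cluster
            else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters

# Invented 2-D observations forming two obvious groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (8, 9), (9, 8)]
centroids, clusters = k_means(points, centroids=[(0, 0), (10, 10)])
```

With k = 2 the two groups are recovered and each centroid settles at the average position of its group.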

||

Thu, 12 February 2015

Emre Sarigol joins me this week to discuss his paper Online Privacy as a Collective Phenomenon. This paper studies data collected from social networks and how the sharing behaviors of individuals can unintentionally reveal private information about other people, including those who have not even joined the social network! For the specific test discussed, the researchers were able to accurately predict the sexual orientation of individuals, even when this information was withheld during the training of their algorithm. The research produces a surprisingly accurate predictor of this private piece of information, and was constructed only with publicly available data from myspace.com found on archive.org. As Emre points out, this is a small shadow of the potential information available to modern social networks. For example, users who install the Facebook app on their mobile phones are (perhaps unknowingly) sharing all their phone contacts. Should a social network like Facebook choose to do so, this information could be aggregated to assemble "shadow profiles" containing rich data on users who may not even have an account. |

||

Thu, 5 February 2015

The Chi-Squared test is a methodology for hypothesis testing. When one has categorical data, in the form of frequency counts or observations (e.g. Vegetarian, Pescetarian, and Omnivore), split into two or more categories (e.g. Male, Female), a question may arise such as "Are women more likely than men to be vegetarian?" or, put more accurately, "Does the frequency with which women report being vegetarian differ in a statistically significant way from the frequency with which men report it?" |
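
As a rough illustration of the arithmetic behind the test, the sketch below computes the Pearson chi-squared statistic for a table of counts in plain Python. The function names and the survey counts are invented for this example and do not come from the episode. The expected count for each cell is (row total × column total) / grand total, and the statistic sums (observed − expected)² / expected over all cells. For the 2×3 table in the example there are 2 degrees of freedom, a special case in which the p-value has the simple closed form exp(−statistic / 2).

```python
import math


def chi_squared_statistic(table):
    """Return (statistic, degrees_of_freedom) for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count we would expect in this cell if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    degrees_of_freedom = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, degrees_of_freedom


def p_value_two_degrees_of_freedom(statistic):
    """Upper-tail probability of a chi-squared distribution with exactly 2 degrees of freedom."""
    return math.exp(-statistic / 2)


# Made-up counts: rows are Male, Female; columns are Vegetarian, Pescetarian, Omnivore.
survey = [
    [12, 8, 80],
    [25, 15, 60],
]
statistic, dof = chi_squared_statistic(survey)
print(statistic, dof, p_value_two_degrees_of_freedom(statistic))
```

For tables with other degrees of freedom the closed form above does not apply, and one would normally rely on a statistics library for the p-value.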

||

Fri, 30 January 2015

My guest this week is noteworthy A.I. researcher Randy Olson, who joins me to share his work creating the Reddit World Map - a visualization that illuminates clusters in the reddit community based on user behavior. Randy's blog post on creating the reddit world map is well complemented by a more detailed write-up titled Navigating the massive world of reddit: using backbone networks to map user interests in social media. Last but not least, an interactive version of the results (which leverages Gephi) can be found here. For a benevolent recommendation, Randy suggests people check out Seaborn - a Python library for statistical data visualization. For a self-serving recommendation, Randy recommends listeners visit the Data is beautiful subreddit where he's a moderator. |

||

Thu, 22 January 2015

When dealing with dynamic systems that are potentially undergoing constant change, it's helpful to describe what "state" they are in. In many applications the manner in which the state changes from one to another is not completely predictable, so there is uncertainty over how it transitions from state to state. Further, in many applications, one cannot directly observe the true state, and thus we describe such situations as partially observable state spaces. This episode explores what this means and why it is important in the context of chess, poker, and the mood of Yoshi the lilac crowned amazon parrot.
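
One common way to reason about a state that cannot be observed directly is to keep a "belief", meaning a probability for each possible state, and update it with Bayes' rule whenever a new observation arrives. The sketch below shows a single update step in plain Python. It is an illustration rather than anything presented in the episode: the state names, the observation names, and every probability are invented assumptions.

```python
def update_belief(belief, transition, emission, observation):
    """Return the new belief over hidden states after one time step and one observation."""
    # Prediction step: push the current belief through the uncertain state transitions.
    predicted = {
        nxt: sum(belief[cur] * transition[cur][nxt] for cur in belief)
        for nxt in belief
    }
    # Correction step: weight each state by how likely it makes the observation.
    unnormalized = {s: predicted[s] * emission[s][observation] for s in predicted}
    total = sum(unnormalized.values())
    return {s: weight / total for s, weight in unnormalized.items()}


# Hypothetical model of a parrot's mood; all numbers are made up.
belief = {"happy": 0.5, "grumpy": 0.5}
transition = {
    "happy": {"happy": 0.8, "grumpy": 0.2},
    "grumpy": {"happy": 0.3, "grumpy": 0.7},
}
emission = {
    "happy": {"whistle": 0.7, "squawk": 0.3},
    "grumpy": {"whistle": 0.2, "squawk": 0.8},
}
print(update_belief(belief, transition, emission, "squawk"))
```

The mood itself is never observed here. Only the sound is, and the belief shifts toward whichever mood makes that sound more likely.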

Direct download: MINI_Partially_Observable_State_Spaces.mp3

Category:miniepisode -- posted at: 11:41pm PDT |

||

Thu, 15 January 2015

My guest this week is Anh Nguyen, a PhD student at the University of Wyoming working in the Evolving AI lab. The episode discusses the paper Deep Neural Networks are Easily Fooled [pdf] by Anh Nguyen, Jason Yosinski, and Jeff Clune. It describes a process for creating images that a trained deep neural network will misclassify. If you have a deep neural network that has been trained to recognize certain types of objects in images, these "fooling" images can be constructed in such a way that the network will misclassify them. To a human observer, these fooling images often have no resemblance whatsoever to the assigned label. Previous work had shown that some images which appear to us to be unrecognizable white noise can fool a deep neural network. This paper extends the result, showing that abstract images of shapes and colors, many of which have form (just not the one the network thinks), can also trick the network.

Direct download: Easily_fooling_deep_neural_networks_1.mp3

Category:deep neural networks, image recognition -- posted at: 8:04pm PDT |

||

Thu, 8 January 2015

This episode introduces a high-level discussion on the topic of Data Provenance, with more MINI episodes to follow to get into specific topics. Thanks to listener Sara L, who wrote in to point out that the Data Skeptic Podcast has focused a lot on using data to be skeptical, but not necessarily on being skeptical of data. Data Provenance is the concept of knowing the full origin of your dataset. Where did it come from? Who collected it? How was it collected? Does it combine independent sources or one singular source? What are the error bounds on the way it was measured? These are just some of the questions one should ask to understand their data. After all, if the antecedent of an argument is built on dubious grounds, the consequent of the argument is equally dubious. For a more technical discussion than what we get into in this mini episode, I recommend A Survey of Data Provenance Techniques by authors Simmhan, Plale, and Gannon. |

||

Fri, 2 January 2015

I had the chance to speak with the well-known Sharon Hill (@idoubtit) for the first episode of 2015. We discuss a number of interesting topics including the contributions Doubtful News makes to getting scientific and skeptical information ranked highly in search results, sinkholes, why earthquakes are hard to predict, and data collection about paranormal groups via the internet. |

||
